RAG Implementation Services

Build accurate, context-aware AI systems by combining Large Language Models with your proprietary knowledge base. Custom RAG pipelines for enterprise document Q&A, support automation, and knowledge management.

Retrieval-Augmented Generation for Enterprise AI

Retrieval-Augmented Generation (RAG) is an AI architecture that enhances Large Language Models by connecting them to your proprietary knowledge base. Instead of relying solely on the model's training data, RAG retrieves the passages most relevant to each question from your documents, databases, or websites and passes them to the model as context. Responses are then grounded in your current data and can be traced back to their sources.

At OrcaMinds, we build custom RAG pipelines that transform how your business accesses information. Whether you need a document Q&A system for internal knowledge management, an AI support agent grounded in your product manuals, or a research assistant that queries your technical documentation, our RAG solutions deliver factual, context-aware answers while greatly reducing the risk of hallucination.
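The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: a toy word-overlap scorer stands in for a real embedding model, the sketch stops at building the prompt instead of calling an LLM, the sample documents are made up, and `retrieve` and `build_prompt` are hypothetical names.

```python
import re

def tokens(text: str) -> set[str]:
    """Lowercase word set. Assumption: a toy stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Return the k (doc_id, text) pairs sharing the most words with the query."""
    q = tokens(query)
    ranked = sorted(docs.items(), key=lambda item: len(q & tokens(item[1])), reverse=True)
    return ranked[:k]

def build_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    """Place retrieved passages ahead of the question so the LLM answers from them."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the context below and cite sources by id.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

# Made-up sample knowledge base for illustration only.
docs = {
    "hr-01": "Employees accrue 20 vacation days per year.",
    "it-07": "Reset your VPN password from the self-service portal.",
    "hr-02": "Unused vacation days roll over up to a maximum of 5.",
}
question = "How many vacation days do I get per year?"
passages = retrieve(question, docs)
prompt = build_prompt(question, passages)
print(prompt)  # this prompt would then be sent to the LLM
```

Because the model sees only the retrieved context, the quality of the retrieval step largely determines the quality of the answer.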

Our RAG Capabilities

Document Ingestion & Processing

Ingest PDFs, Word docs, websites, Notion, Confluence, and more. Chunking and preprocessing are tuned to your content so that each retrieved passage is complete and relevant.
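A common baseline for chunking is fixed-size windows with overlap, so that a sentence cut at one boundary still appears whole in the neighbouring chunk. The sketch below is one such approach under a simplifying assumption: sizes are counted in whitespace-separated words, whereas production pipelines usually count model tokens. `chunk_words` is a hypothetical helper.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of `size` words; consecutive chunks share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()  # assumption: words approximate tokens
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks

sample = " ".join(f"w{i}" for i in range(10))
print(chunk_words(sample, size=4, overlap=1))
# ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Larger chunks carry more context per hit but dilute the embedding; the overlap trades some index size for fewer answers split across boundaries.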

Vector Database Integration

Store and search embeddings using Pinecone, Weaviate, Qdrant, Chroma, or PostgreSQL with pgvector for scalable similarity search.
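At its core, similarity search ranks stored embeddings by how close they are to the query embedding. The brute-force sketch below uses cosine similarity over a small in-memory dictionary; the three-dimensional vectors are made-up stand-ins for real embeddings. Vector databases such as those listed above typically use approximate nearest-neighbour indexes instead of scanning every vector, which is what makes them scale.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of the vector norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Score every stored vector against the query (exact search) and keep the k best."""
    scores = [(doc_id, cosine(query, vec)) for doc_id, vec in index.items()]
    return sorted(scores, key=lambda s: s[1], reverse=True)[:k]

# Made-up embeddings for illustration; real ones have hundreds of dimensions.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.2],
    "warranty": [0.7, 0.3, 0.1],
}
for doc_id, score in top_k([1.0, 0.2, 0.0], index):
    print(doc_id, round(score, 3))
```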

Hybrid Search & Reranking

Combine semantic search with keyword matching, then rerank the merged results so that only the most relevant passages are passed to your LLM as context.
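One widely used way to merge semantic and keyword results is Reciprocal Rank Fusion (RRF), shown here as an illustration of the technique rather than as the specific reranking strategy used in any given project. The sketch assumes both retrievers have already returned ranked lists of document ids and uses the commonly cited constant k = 60; the ids are made up.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked id lists: each list adds 1 / (k + rank) to a document's score."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):  # ranks are 1-based
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

semantic = ["doc-a", "doc-b", "doc-c"]  # e.g. from vector search
keyword = ["doc-c", "doc-a", "doc-d"]   # e.g. from keyword search
print(reciprocal_rank_fusion([semantic, keyword]))
# ['doc-a', 'doc-c', 'doc-b', 'doc-d']
```

RRF only uses rank positions, so it needs no calibration between the very different score scales of vector and keyword search. A cross-encoder or LLM-based reranker can then be applied to the fused top results.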

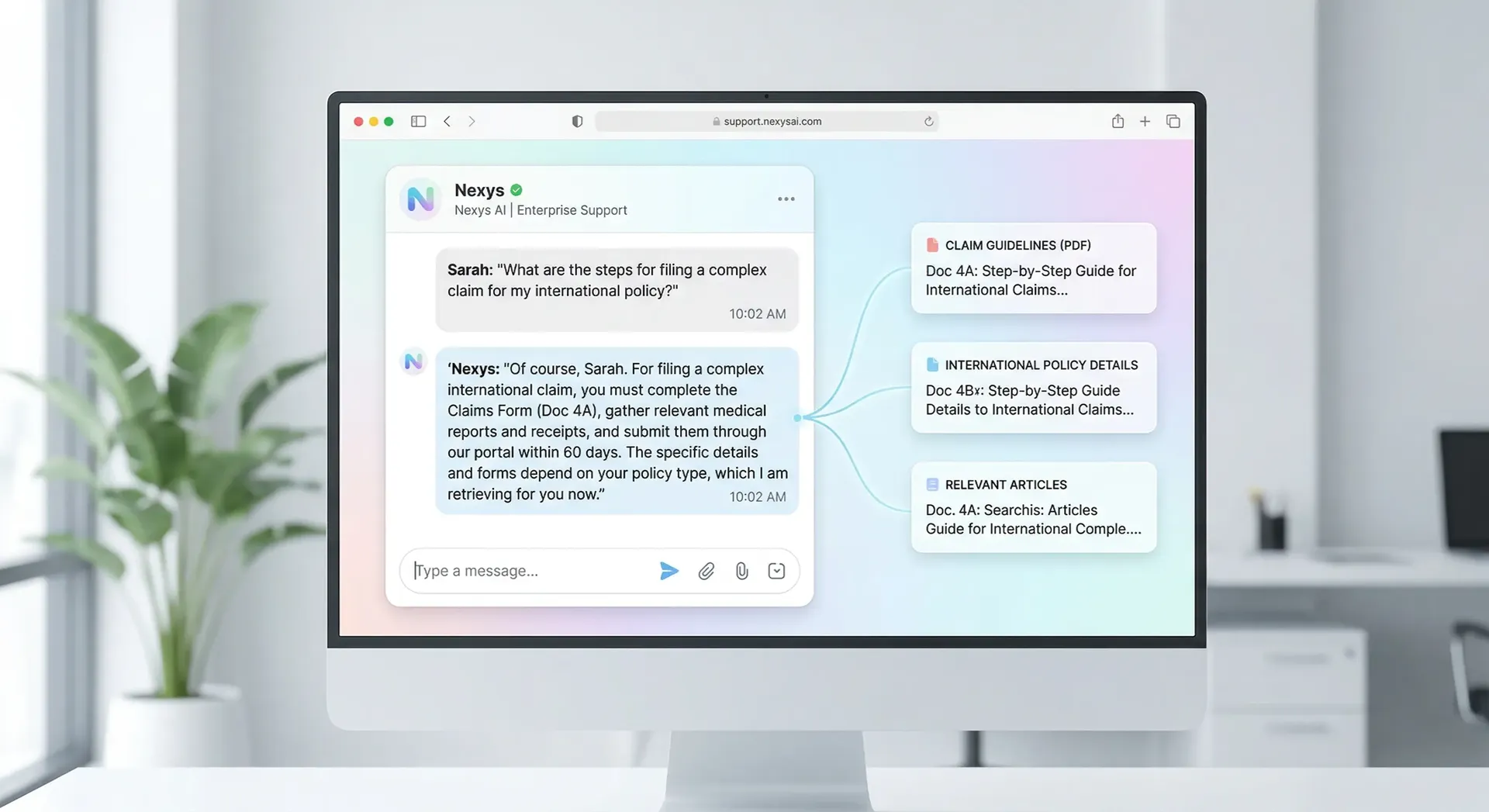

Citation & Source Tracking

Every response includes source references. Build trust with verifiable answers that link back to original documents.
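Source tracking works by keeping each chunk's origin attached to it through retrieval, so the answer can list exactly where its context came from. The sketch below assumes every chunk stores its source title and URL as metadata; `Chunk` and `format_citations` are hypothetical names, and the example.com URLs are placeholders.

```python
from dataclasses import dataclass

@dataclass
class Chunk:
    text: str
    source_title: str  # assumption: stored as metadata at ingestion time
    source_url: str

def format_citations(answer: str, used: list[Chunk]) -> str:
    """Append numbered source references, listing each source once in first-use order."""
    numbers: dict[str, int] = {}
    refs: list[str] = []
    for chunk in used:
        if chunk.source_url not in numbers:
            numbers[chunk.source_url] = len(numbers) + 1
            refs.append(f"[{numbers[chunk.source_url]}] {chunk.source_title} ({chunk.source_url})")
    return answer + "\n\nSources:\n" + "\n".join(refs)

used = [
    Chunk("Returns are accepted within 30 days.", "Returns Policy", "https://example.com/returns"),
    Chunk("Refunds go to the original payment method.", "Returns Policy", "https://example.com/returns"),
    Chunk("Items must be unused.", "Product Care Guide", "https://example.com/care"),
]
print(format_citations("You can return unused items within 30 days [1].", used))
```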

Real-Time Data Sync

Keep your RAG pipeline updated with automatic ingestion when new documents are added or existing ones change.
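One way to decide what to re-ingest on each sync run is to compare a content hash of every current document with the hash recorded when it was last indexed. The sketch below assumes SHA-256 hashes stored per document id; `plan_sync` is a hypothetical helper, and a real connector might instead rely on modification timestamps or change notifications from the source system.

```python
import hashlib

def content_hash(text: str) -> str:
    """SHA-256 hex digest of the document text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def plan_sync(current: dict[str, str], indexed: dict[str, str]) -> dict[str, list[str]]:
    """Compare current docs (id -> text) with hashes stored at last ingestion (id -> hash)."""
    plan: dict[str, list[str]] = {"add": [], "update": [], "delete": []}
    for doc_id, text in current.items():
        if doc_id not in indexed:
            plan["add"].append(doc_id)  # new document: chunk, embed, index
        elif indexed[doc_id] != content_hash(text):
            plan["update"].append(doc_id)  # changed: replace its old chunks
    # Documents removed at the source should also leave the index.
    plan["delete"] = [doc_id for doc_id in indexed if doc_id not in current]
    return plan

indexed = {"faq": content_hash("old faq"), "manual": content_hash("manual v1"), "retired": content_hash("x")}
current = {"faq": "new faq", "manual": "manual v1", "guide": "setup guide"}
print(plan_sync(current, indexed))
# {'add': ['guide'], 'update': ['faq'], 'delete': ['retired']}
```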

Access Control & Security

Role-based access to documents. Ensure users only retrieve information from authorized sources.
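Role-based access can be enforced by attaching the permitted roles to every indexed chunk and discarding anything the requesting user may not see before it reaches the LLM. The sketch below shows that filter in plain Python with made-up role names and documents; in practice the same condition is often pushed down into the vector database query as a metadata filter, so unauthorized chunks are never returned at all.

```python
from dataclasses import dataclass, field

@dataclass
class IndexedChunk:
    doc_id: str
    text: str
    allowed_roles: set[str] = field(default_factory=set)  # empty set: deny everyone

def authorized(chunks: list[IndexedChunk], user_roles: set[str]) -> list[IndexedChunk]:
    """Keep only chunks whose allowed roles overlap the requesting user's roles."""
    return [c for c in chunks if c.allowed_roles & user_roles]

results = [
    IndexedChunk("salary-bands", "Salary band details for HR review.", {"hr"}),
    IndexedChunk("vpn-setup", "Install the VPN client from the portal.", {"employee", "it"}),
]
visible = authorized(results, {"employee"})
print([c.doc_id for c in visible])  # ['vpn-setup']
```

Denying by default when a chunk has no roles means a document with missing permissions stays hidden rather than leaking.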

Perfect Use Cases for RAG

Enterprise Knowledge Management

Internal Q&A on policies, HR docs, technical manuals

AI Customer Support

Support bots grounded in your product docs and FAQs

Research Assistant

Query research papers, technical documentation, case studies

Legal & Compliance

Contract analysis, regulatory document Q&A

E-Commerce Product Q&A

Answer product questions from catalogs and specifications

Education & E-Learning

Course material Q&A, personalized tutoring

Our RAG Development Process

Knowledge Base Audit & Strategy

We analyze your documents, identify key information sources, and design a chunking and indexing strategy suited to your content.

Vector Database & Embedding Pipeline

We set up vector databases, create embeddings using state-of-the-art models, and build ingestion pipelines.

Retrieval & Generation Orchestration

We implement retrieval strategies, prompt engineering, and LLM orchestration using LangChain or LlamaIndex.

Deployment, Monitoring & Refinement

We deploy the pipeline as an API, set up user feedback loops, and run continuous evaluation to improve retrieval relevance and response quality.
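Continuous evaluation needs concrete metrics. One common retrieval metric is recall@k: the share of documents known to be relevant that appear in the top k results. The sketch below assumes a small labeled set of questions, each paired with the ids the retriever returned and the ids it should have found; the evaluation data is made up.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k retrieved ids."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over a labeled evaluation set."""
    if not runs:
        return 0.0
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)

# Made-up evaluation runs: (retrieved ids in rank order, ids that should be found)
runs = [
    (["d1", "d4", "d2"], {"d1", "d2"}),  # both relevant docs in the top 3
    (["d7", "d3", "d9"], {"d5"}),        # relevant doc missed entirely
]
print(mean_recall_at_k(runs, k=3))  # 0.5
```

Tracking this number on a fixed question set before and after each change to chunking, embeddings, or reranking shows whether retrieval actually improved.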