LLM Development Services

Build custom Large Language Model (LLM) solutions tailored to your business: fine-tuning, prompt engineering, deployment, and integration of state-of-the-art models.

Custom Large Language Models for Your Business

Large Language Models (LLMs) are transforming how businesses interact with data and customers. At OrcaMinds, we help you harness the power of LLMs through custom fine-tuning, prompt engineering, and seamless deployment. Our expertise spans proprietary models such as GPT-4, Claude, and Gemini, as well as open models such as Llama 3, Mistral, and Falcon.

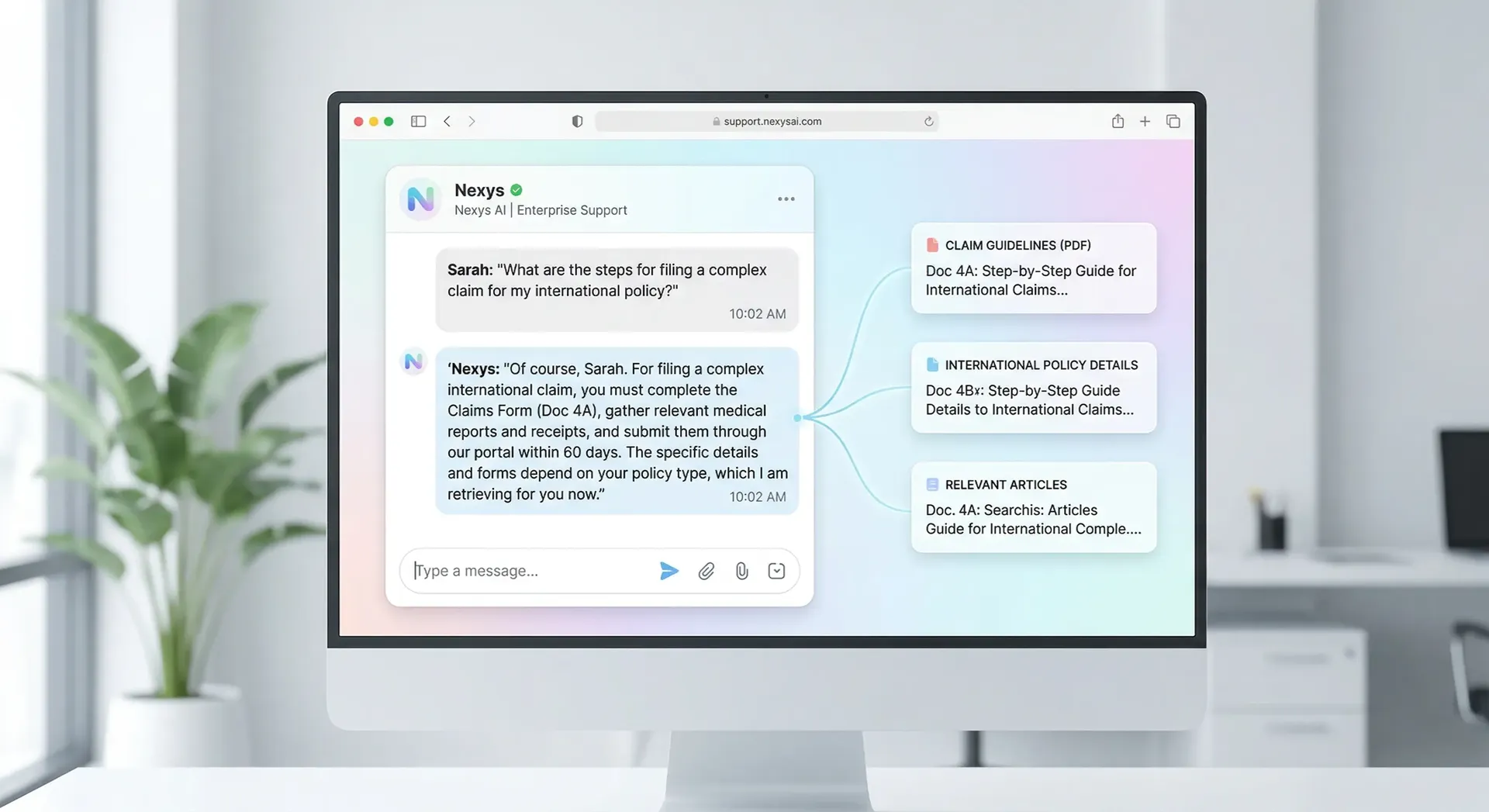

Based in Ahmedabad, India, we build LLM solutions for content generation, code assistance, document analysis, customer support, and knowledge management. Whether you need to fine-tune a model on your proprietary data, optimize prompts for specific tasks, or deploy LLMs at scale, our team delivers production-ready solutions.

Our LLM Capabilities

Custom Fine-Tuning

Fine-tune pre-trained LLMs on your proprietary data to improve accuracy, relevance, and domain-specific knowledge.

Prompt Engineering

Design and optimize prompts to get the most accurate, relevant, and consistent responses from LLMs.

Model Deployment

Deploy LLMs in the cloud (AWS, Azure, GCP) or on-premises, behind scalable APIs for production use.

RAG Integration

Combine LLMs with your knowledge base using Retrieval-Augmented Generation for accurate, context-aware responses.
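To make the idea concrete, here is a minimal sketch of the retrieval step, assuming a tiny in-memory knowledge base and plain word-overlap (bag-of-words cosine) scoring in place of the embedding model and vector store a production system would typically use. The function names and sample documents are illustrative only, not part of any specific product.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Assemble a prompt that grounds the model in the retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


# Made-up sample knowledge base, for illustration only.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days.",
    "Support is available Monday to Friday, 9am to 6pm IST.",
    "Enterprise plans include a dedicated account manager.",
]
```

Calling `build_prompt("How long do refunds take?", KNOWLEDGE_BASE, k=1)` produces a prompt whose context contains only the refund policy line; that prompt would then be sent to whichever LLM is in use.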

LLM Evaluation & Monitoring

Continuous evaluation of model performance, hallucination detection, and response quality monitoring.
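As a rough illustration of what an automated check can look like, the sketch below flags answers whose wording is poorly supported by the source context. It assumes a crude lexical-overlap heuristic and a hypothetical record format (`answer` and `context` keys); real hallucination detection usually relies on stronger signals such as human review or model-based grading.

```python
import re


def tokens(text):
    """Return the set of lowercase alphanumeric word tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def support_score(answer, context):
    """Fraction of distinct answer tokens that also appear in the context."""
    answer_tokens = tokens(answer)
    if not answer_tokens:
        # An empty answer makes no claims, so nothing is unsupported.
        return 1.0
    return len(answer_tokens & tokens(context)) / len(answer_tokens)


def flag_unsupported(records, threshold=0.5):
    """Return the records whose answer scores below the support threshold."""
    return [
        r for r in records
        if support_score(r["answer"], r["context"]) < threshold
    ]
```

Flagged records can then be routed to manual review or tracked as a quality metric over time. The 0.5 threshold is an arbitrary starting point and would need tuning per use case.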

Cost Optimization

Optimize token usage, caching strategies, and model selection to reduce operational costs.
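One common caching strategy is to reuse the response for a prompt that has already been answered. The sketch below assumes an exact-match cache keyed on the model name and whitespace-normalized prompt; `CachedModel` and `fake_model` are hypothetical names, and `fake_model` stands in for a real (billable) API call.

```python
import hashlib


class CachedModel:
    """Wraps a model-calling function with an in-memory response cache."""

    def __init__(self, call_model):
        self._call_model = call_model  # function(model, prompt) -> str
        self._cache = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model, prompt):
        # Collapse whitespace so trivially different prompts share one entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def complete(self, model, prompt):
        key = self._key(model, prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        response = self._call_model(model, prompt)
        self._cache[key] = response
        return response


def fake_model(model, prompt):
    """Stand-in for a real LLM API call, used only for this example."""
    return f"[{model}] echo: {prompt}"
```

With `cached = CachedModel(fake_model)`, a second call to `cached.complete(...)` with the same model and prompt is served from memory and increments `cached.hits` instead of calling the model again. This kind of cache suits cases where a repeated prompt should yield the same answer; it is less appropriate when varied outputs are expected.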

LLM Models We Work With

GPT-4 / GPT-4o

OpenAI's flagship models

Claude 3

Anthropic's safety-focused models

Gemini

Google's multimodal LLM

Llama 3

Meta's open-weight model family

Mistral

Efficient open-source LLM

Falcon

TII's open model family

Our LLM Development Process

Requirements & Use Case Analysis

We identify your specific LLM use cases, data requirements, and success metrics.

Model Selection & Fine-Tuning

We select the base model that best fits your use case and fine-tune it on your proprietary data, measuring accuracy against the agreed success metrics.

Integration & Deployment

We deploy your custom LLM behind APIs or chat interfaces, or integrate it into your existing applications.

Monitoring & Optimization

Continuous monitoring, performance optimization, and regular model updates.